Decisions are made minute-to-minute

Most “recent imagery” services aren’t truly live and can’t be directed on the fly, leaving teams to act on stale views of fast-changing situations.

Satellite imagery = near-real-time, not real-time

Even the fastest commercial constellations describe their service as near-real-time, with revisit cycles and delivery delays that often run from minutes to hours. Tasking is indirect, weather and occlusion are common, and you don’t pilot the sensor.

Traditional “drone mapping” = delayed products

Standard UAV mapping workflows capture, upload, and process imagery before you see orthomosaics or 3D outputs. That is great for documentation, but not for live maneuvering or rapid retasking.

Video ≠ a map

Standard drone flights or live feeds provide only a video recording of the flight path, not a usable, geo-referenced map of the area. That leaves teams without the spatial context required for coordination, planning, or rapid decision-making.

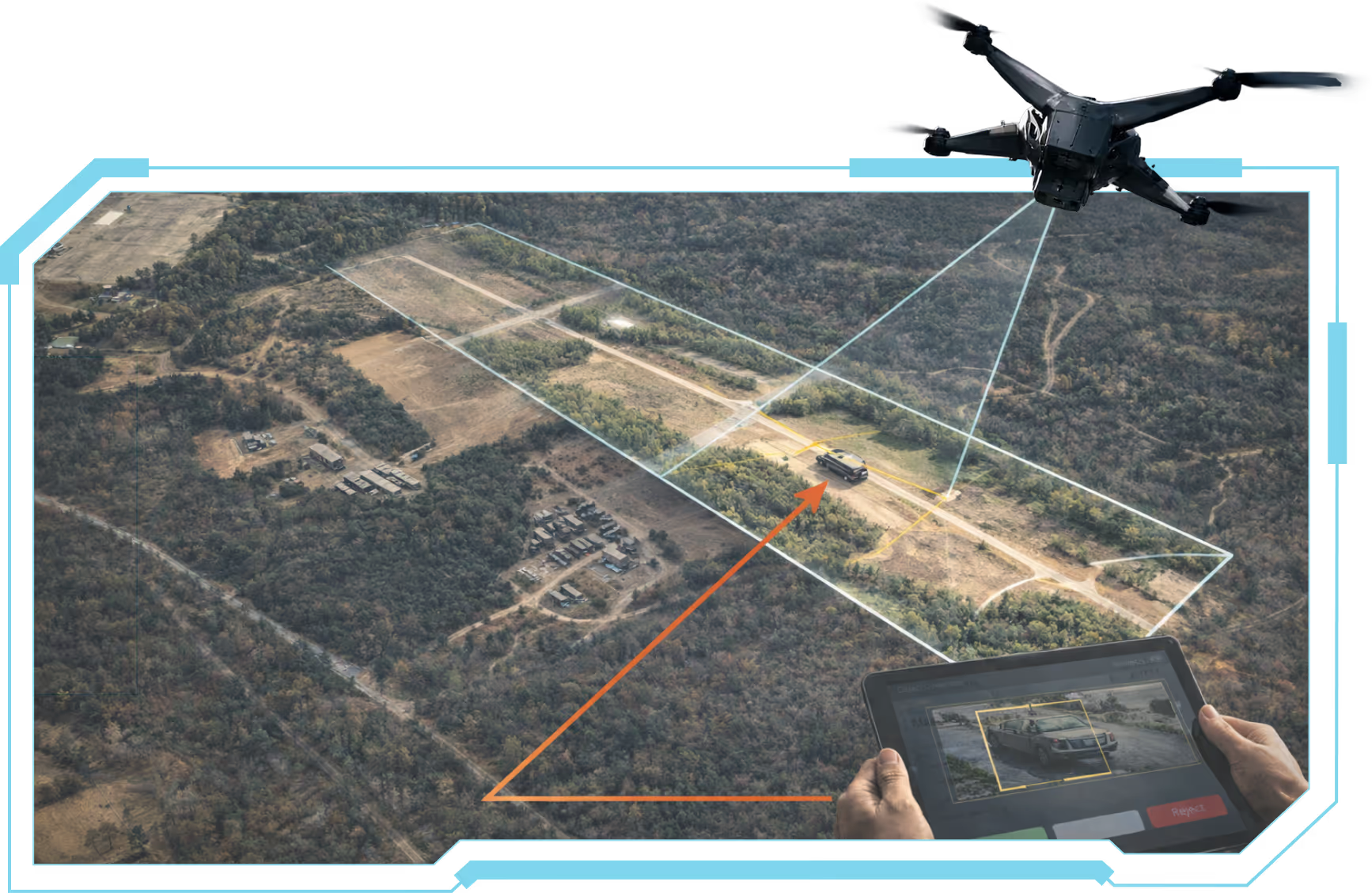

Our system delivers true real-time tactical mapping by stitching aerial imagery as it’s captured and instantly displaying it on the map. Instead of waiting for post-flight processing, operators see a live, continuously updated map of the area.

Real-time stitched imagery → Maps are generated on the fly, not after the mission.

Geo-referenced objects → Every feature is precisely located for immediate decision-making.

Actionable situational awareness → Operators gain a dynamic, evolving map that reflects changing conditions in real time.

SHARCS

Our tactical mapping system integrates seamlessly with SHARCS (Super-Human Automated Robotic Classification System), an advanced modular AI architecture for real-time object detection and classification.

With SHARCS, users gain three core capabilities:

Real-time identification and classification of objects in the field.

The ability to validate and correct AI detections instantly, improving accuracy on the spot.

User-driven tuning of machine learning models, enabling teams to refine AI performance for their specific mission needs.
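The validate-and-correct loop described above can be sketched as a small data flow. This is only an illustration under stated assumptions: the `Detection` and `Review` types and the `apply_reviews` function are hypothetical names, not part of SHARCS, and the actual system may represent operator feedback quite differently.

```python
from dataclasses import dataclass, replace
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class Detection:
    """Hypothetical record for one AI detection placed on the map."""
    detection_id: str
    label: str
    confidence: float
    lat: float
    lon: float


@dataclass(frozen=True)
class Review:
    """Hypothetical operator verdict on a single detection."""
    detection_id: str
    accepted: bool
    corrected_label: Optional[str] = None


def apply_reviews(
    detections: List[Detection], reviews: List[Review]
) -> Tuple[List[Detection], List[Tuple[Detection, Optional[str]]]]:
    """Return the detections to keep on the map plus feedback pairs.

    Each feedback pair is (original detection, operator label), where a label
    of None marks a rejected detection (a false positive). Such pairs are the
    kind of data a later model-tuning step could consume.
    """
    by_id = {review.detection_id: review for review in reviews}
    kept: List[Detection] = []
    feedback: List[Tuple[Detection, Optional[str]]] = []
    for det in detections:
        review = by_id.get(det.detection_id)
        if review is None:
            kept.append(det)  # not reviewed yet, so leave it untouched
            continue
        if not review.accepted:
            feedback.append((det, None))  # rejected: drop it from the map
            continue
        label = review.corrected_label or det.label
        kept.append(replace(det, label=label))
        feedback.append((det, label))
    return kept, feedback
```

Keeping the original detection alongside the operator label preserves what the model predicted, which is what a tuning step would need in order to learn from the correction.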

Image Capture

Drones (or other unmanned systems) collect high-resolution imagery during flight.

Real-Time Processing

The imagery is stitched, georeferenced, and georectified in real time.
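To make “georeferenced” concrete: once a tile is georeferenced, any pixel in it can be converted to a map coordinate, which is what lets a detection be placed on the map. The sketch below uses the common six-coefficient affine transform, with coefficients in the same order as a GDAL geotransform. It is an illustration only. The source does not describe how the system georeferences imagery, and the `GeoTransform` class and the sample values are assumptions.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class GeoTransform:
    """Affine pixel-to-map transform for one tile (hypothetical helper)."""
    origin_x: float      # map x (e.g. longitude) of the top-left corner
    pixel_width: float   # map units per pixel along a row
    row_rotation: float  # 0.0 for a north-up tile
    origin_y: float      # map y (e.g. latitude) of the top-left corner
    col_rotation: float  # 0.0 for a north-up tile
    pixel_height: float  # negative for north-up tiles (rows run southward)

    def pixel_to_map(self, col: float, row: float) -> Tuple[float, float]:
        """Convert a pixel position (col, row) to map coordinates (x, y)."""
        x = self.origin_x + col * self.pixel_width + row * self.row_rotation
        y = self.origin_y + col * self.col_rotation + row * self.pixel_height
        return x, y

    def map_to_pixel(self, x: float, y: float) -> Tuple[float, float]:
        """Invert the transform: map coordinates back to (col, row)."""
        det = (self.pixel_width * self.pixel_height
               - self.row_rotation * self.col_rotation)
        if det == 0:
            raise ValueError("transform is not invertible")
        dx, dy = x - self.origin_x, y - self.origin_y
        col = (self.pixel_height * dx - self.row_rotation * dy) / det
        row = (self.pixel_width * dy - self.col_rotation * dx) / det
        return col, row


# Illustrative values only: a north-up tile with 0.0001-degree pixels.
tile = GeoTransform(-121.0, 0.0001, 0.0, 36.0, 0.0, -0.0001)
lon, lat = tile.pixel_to_map(100, 200)  # roughly (-120.99, 35.98)
```

The inverse direction is useful when an operator taps a map location and the system needs the matching pixel in the source frame.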

Shared Situational Awareness

Users see the live, continuously updated map, optionally overlaid with object detections flagged by SHARCS.

Data Distribution

Processed maps are streamed directly to the ATAK device, enabling rapid toggling between data from multiple sources and timeframes. Users can instantly identify environmental changes, such as new vehicle activity or infrastructure shifts, by swapping between historical and live imagery.
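Swapping between historical and live imagery amounts to choosing, for each source, the most recent layer captured at or before a given moment. The sketch below shows that selection over an in-memory list. It is a simplified illustration: `MapLayer`, `latest_layer`, the source names, and the URIs are all hypothetical, and nothing here reflects the actual ATAK plugin interface.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Iterable, Optional


@dataclass(frozen=True)
class MapLayer:
    """Hypothetical description of one processed map layer."""
    source: str            # e.g. "uas-live" or "satellite" (made-up names)
    captured_at: datetime  # when the underlying imagery was collected
    uri: str               # placeholder for wherever the tiles live


def latest_layer(
    layers: Iterable[MapLayer], source: str, not_after: datetime
) -> Optional[MapLayer]:
    """Return the newest layer from `source` captured at or before `not_after`.

    Returns None when the source has no layer that old.
    """
    candidates = [
        layer for layer in layers
        if layer.source == source and layer.captured_at <= not_after
    ]
    return max(candidates, key=lambda layer: layer.captured_at, default=None)


# Illustrative catalog: two passes from one drone source, one satellite layer.
catalog = [
    MapLayer("uas-live", datetime(2024, 1, 1, 10, 0), "tiles/pass-1"),
    MapLayer("uas-live", datetime(2024, 1, 1, 12, 0), "tiles/pass-2"),
    MapLayer("satellite", datetime(2024, 1, 1, 11, 0), "tiles/sat-1"),
]
before = latest_layer(catalog, "uas-live", datetime(2024, 1, 1, 11, 0))
now = latest_layer(catalog, "uas-live", datetime(2024, 1, 1, 13, 0))
```

Comparing `before` and `now` side by side is the kind of toggle that would surface new vehicle activity between two passes.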

ATAK-LM has been rigorously tested in real-world operational environments, including:

JIFX (Joint Interagency Field Experimentation) – validating interoperability in complex, multi-user scenarios.

TReX (Technology Readiness Experimentation) – ensuring reliability, resilience, and mission readiness.